Pictured above: Charlie enjoying a day in her pool with pool masters (and beta testers) Randy and Tabitha Savage

We realized early that it would be important to gather as much data as possible about how people test and maintain their pool or spa water. Since we’re in Silicon Valley and data geeks at heart, we got inspired by an app that most of you probably use daily: Waze.

Waze is on almost everyone’s phone today, but the most inspiring part of its story is how it started. The founders wanted to build an application where drivers could exchange information about traffic and other road conditions. The hurdle they encountered was that the GPS data required expensive subscriptions.

To circumvent this, they asked their first users to run an app while driving. To complement the raw data generating the maps, they asked other volunteers to edit those resulting maps to add street names. With numerous drivers generating the same maps and other volunteers editing the maps to ensure accuracy, a free GPS and road conditions program evolved.

As more and more volunteers contributed, the maps became more accurate. Of course, new roads spring up, detours happen with construction projects, and traffic patterns change with each hour of the day. With Waze’s crowdsourced method, a treasure trove of data is continually processed, benefiting local drivers worldwide. Although Waze was later acquired by Google, the crowdsourced data continues to be processed today.

The Wisdom of the Crowd

In the spirit of Waze, Sutro has been crowdsourcing data over the past month to eventually incorporate into our new Smart Monitor system. Specifically, we have been looking at the app since we don’t yet have testable devices (they are coming soon). The more data we can collect and process, the better we will be able to help people all over the world keep their pools and spas safe for their friends and family.

From the user point of view, app testing consists of taking manual pool readings, emailing them to us, and then looking at the app to see the recommendations. Soon, we’ll be taking this a step further and will ask for your confirmations or denials about the suitability and accuracy of those recommendations.

Along with testing, we are also collecting details of the chemicals people use to treat their pools so that we can add them to our expanding database and recommend any of them.

Crowdsourced Results

Participation in the phase 1 beta program has exceeded expectations, and the data collected has generated over a dozen improvements to our system. Participants are from all over the US and Canada and have collectively completed over 200 pool readings and uploaded close to 300 pool chemicals over the last four weeks. The following graphs show the distribution of readings for each of the parameters we measure:

First, let’s look at the pH graph. As you can see, the majority of readings come in between 7.4 and 7.6, which is perfect, but many are significantly above or below that range. Also, since our lower limit is 6.8 and our upper limit is 8.4, those graph lines are a bit misleading, as many of the readings are outside those limits.

Second, the Free Chlorine graph. Here you can see the majority of readings hover around the 3.5 ppm level and the zero level. As with the pH graph, we have an upper limit here too, set at 10 ppm. We’ve received some readings as high as 20 ppm.

Last, but not least, is the Total Alkalinity graph. This one seems to be easy to get in the desired range of 100-120. The readings at the lowest limits come from beta testers who had just opened their pools and had not yet added any chemical treatment.
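To make the point about readings falling outside our limits concrete, here is a minimal Python sketch of a range check. The pH limits (6.8 to 8.4) and the Free Chlorine upper limit (10 ppm) are the ones mentioned above; the chlorine lower limit of 0, the names `LIMITS`, `classify`, and `summarize`, and the sample values are assumptions made for illustration, not our production code or real beta data.

```python
# Limits per reading type as (low, high).
# pH bounds and the chlorine upper bound are from the post;
# the chlorine lower bound of 0.0 is an assumption for this sketch.
LIMITS = {
    "ph": (6.8, 8.4),
    "free_chlorine_ppm": (0.0, 10.0),
}


def classify(kind, value):
    """Return 'below', 'within', or 'above' relative to the limits for kind."""
    low, high = LIMITS[kind]
    if value < low:
        return "below"
    if value > high:
        return "above"
    return "within"


def summarize(kind, values):
    """Count how many readings fall below, within, or above the limits."""
    counts = {"below": 0, "within": 0, "above": 0}
    for value in values:
        counts[classify(kind, value)] += 1
    return counts


if __name__ == "__main__":
    # Illustrative values only, not actual beta-test readings.
    sample_ph = [6.2, 7.4, 7.6, 8.6]
    print(summarize("ph", sample_ph))
    print(classify("free_chlorine_ppm", 20.0))
```

A count like this shows at a glance how many readings a chart with fixed limits would hide or clip.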

Here Comes the Rain Again

We wanted to share with you these findings because, at Sutro, we’re trying to build a simple, safe, and seamless way for people to measure, monitor, and adjust treatments for their pool (or spa) water. As such, the more data and feedback we can get on our system, the better it will be.

Here are some of the takeaways from our data analysis so far:

- Weather plays a big role in maintaining healthy water

- It takes several days or even a week or two to open a pool. This is especially the case in the northeast

- The range of pH when a pool is opened can be as low as 6.2. Our system currently has a lower limit of 6.8. It’s something we’re looking into fixing

- We’ve also seen one or two Total Alkalinity measurements reaching as high as 200

- Adding various sanitizers and clarifiers may wreak havoc on Alkalinity measurements

Overall, “challenging” is an understatement when it comes to keeping your water safe and at the right level of chemicals.

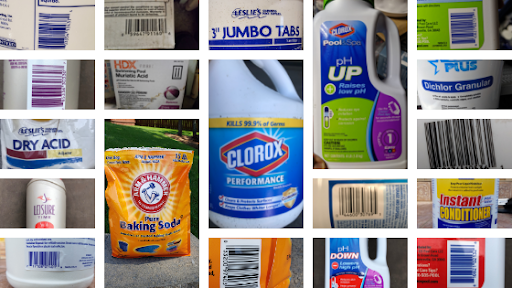

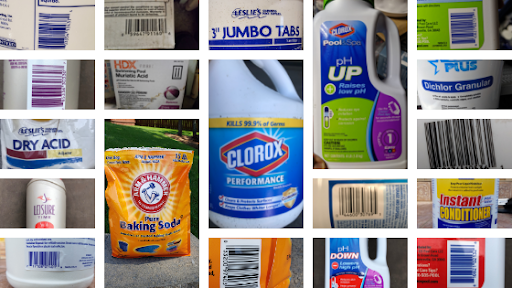

A Cacophony of Chemicals

Of the pool chemicals uploaded, approximately half are unique, meaning that our participants use a wide variety of chemicals to treat their water. This is understandable given the wide geography that participants come from. We specifically requested pictures of the front labels as well as the Universal Product Code (UPC) labels. Some interesting observations immediately caught our eye.

- The most popular brands are Leslie’s and Clorox, but their products, even within the same brand, come in various sizes

- Many folks use off-the-shelf products like Arm & Hammer baking soda. And, just to make things more difficult for us, that particular product is sold in several different weight boxes.

- The chemical packaging can be very heavy. In some cases, people have 50-pound boxes of chemicals.

- Some retailers like to hide the UPC codes by replacing them with their own proprietary stickers. This surely hinders our efforts.

- Some manufacturers like to use their own euphemisms for “increasers” and “decreasers”. Parsing words like “UP”, “Increaser”, “Xtra”, “Extra”, “Super”, etc. makes our lives challenging. “Blue” and “Xtra Blue” are dancing in our dreams.

- Who knew there were so many words to describe “Shock”? What’s the difference between “super shock”, “ultimate shock”, and “triple action shock”?

- Where else in society does a three-inch product qualify as “Jumbo”?

- Phosphates, calcium, and unpronounceable terms like Sodium dichloro-s-triazinetrione dihydrate, Potassium Peroxymonosulfate, and didecyl-dimethyl-ammonium chloride are challenging enough, but what happens when you encounter “1,3-dichloro-5,5-dimethylhydantoin”?
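The naming problem in the list above can be illustrated with a small Python sketch that strips marketing modifiers and picks out direction words from a product name. This is only a rough illustration under stated assumptions: the word lists and the `normalize` function are hypothetical, “down” is our own guess at a decreaser synonym, and real labels would need far more care than keyword matching.

```python
import re

# Marketing modifiers to drop; taken from the examples in the post.
MODIFIERS = {"xtra", "extra", "super", "ultimate", "jumbo"}

# Direction words mapped to a canonical label.
# "down" is an assumed synonym; it does not appear in the post.
DIRECTION = {
    "up": "increaser",
    "increaser": "increaser",
    "down": "decreaser",
    "decreaser": "decreaser",
}


def normalize(name):
    """Return (core_name, direction) for a product name.

    direction is 'increaser', 'decreaser', or None when no direction
    word is present.
    """
    tokens = re.findall(r"[a-z]+", name.lower())
    direction = next((DIRECTION[t] for t in tokens if t in DIRECTION), None)
    core = [t for t in tokens if t not in MODIFIERS and t not in DIRECTION]
    return " ".join(core), direction


if __name__ == "__main__":
    for label in ["pH UP", "Alkalinity Increaser", "Blue", "Xtra Blue", "Super Shock"]:
        print(label, "->", normalize(label))
```

With these word lists, “Blue” and “Xtra Blue” reduce to the same core name, which only groups them for a human to review; whether they are really the same product is exactly the kind of question keyword matching cannot answer.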

Want to Help Us Get Smarter?

The response to our request for help in making our system smarter has been stellar. Thanks to all of you who have been patient with us and sent in your chemical photos. We’re always looking to improve, so if you’d like to help us, please go here and send us pictures of your pool or spa chemicals.